International Conference on Intelligent Robots and Systems (IROS) 2024 Oral!

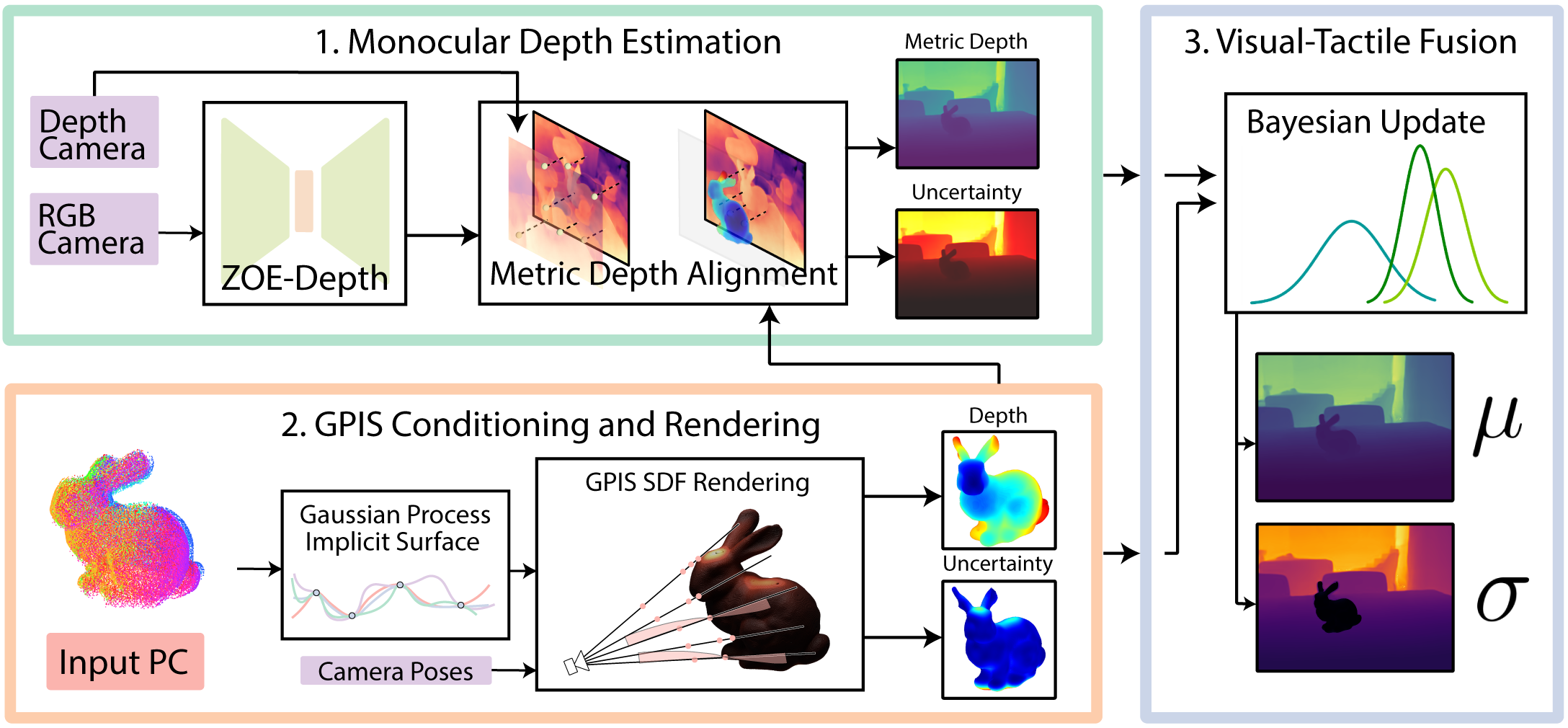

This repo houses the code and data for our work on Touch-GS.

The pipeline has been tested on Ubuntu 22.04.

- CUDA 11+

- Python 3.8+

- Conda or Mamba (optional)

Install PyTorch with CUDA (this repo has been tested with CUDA 11.7 and CUDA 11.8) and tiny-cuda-nn.

cuda-toolkit is required for building tiny-cuda-nn.

For CUDA 11.8:

conda create --name touch-gs python=3.8

conda activate touch-gs

pip install torch==2.0.1 torchvision==0.15.2 torchaudio==2.0.2 --index-url https://download.pytorch.org/whl/cu118

conda install -c "nvidia/label/cuda-11.8.0" cuda-toolkit

pip install ninja git+https://github.com/NVlabs/tiny-cuda-nn/#subdirectory=bindings/torch

See Dependencies in the Installation documentation for more.
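Before moving on, it can help to confirm that the tools installed above are actually on your `PATH`. The snippet below is a minimal sketch of such a check; the helper name `check_tool` is our own illustration and is not part of this repo.

```shell
#!/usr/bin/env bash
# Sanity-check sketch: report whether each tool used by the install steps is on PATH.
# check_tool is an illustrative helper, not something shipped with Touch-GS.

check_tool() {
    # command -v succeeds only if the shell can resolve the name
    if command -v "$1" >/dev/null 2>&1; then
        echo "ok: $1"
    else
        echo "missing: $1"
        return 1
    fi
}

# nvcc comes from cuda-toolkit; ninja is needed to build tiny-cuda-nn
for tool in python pip nvcc ninja; do
    check_tool "$tool" || true
done
```

If every tool reports `ok`, running `python -c "import torch; print(torch.version.cuda, torch.cuda.is_available())"` inside the `touch-gs` environment should report a CUDA 11.8 build and, on a machine with a working GPU driver, `True`.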

Repo Cloning

Because the repo uses submodules, clone it with `--recurse-submodules`:

git clone --recurse-submodules https://github.com/armlabstanford/Touch-GS

We have implemented our own GPIS (Gaussian Process Implicit Surface) from scratch here!
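If the repo was cloned without `--recurse-submodules`, the submodule directories will be empty. The sketch below shows one way to recover with standard git commands; the function names `init_submodules` and `count_uninitialized` are illustrative helpers and are not part of this repo.

```shell
#!/usr/bin/env bash
# Sketch: recover submodules after a plain `git clone`.
# Both function names are illustrative, not part of Touch-GS.

# Initialize and fetch every submodule (including nested ones) in the given clone.
init_submodules() {
    git -C "${1:-.}" submodule update --init --recursive
}

# Read `git submodule status` output on stdin and count the entries that start
# with '-', the prefix git uses for a submodule that is not initialized.
count_uninitialized() {
    grep -c '^-' || true
}

# Typical use from the parent directory of the clone:
#   init_submodules Touch-GS
#   git -C Touch-GS submodule status --recursive | count_uninitialized
```

A count of `0` means every submodule has been initialized.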

Please follow the steps to install the repo there.

You can find more detailed instructions in Nerfstudio's README.

To install Nerfstudio, run:

bash install_ns.sh

We have made an end-to-end pipeline that takes care of setting up the data, training, and evaluating our method. Note that we will release the code for running the ablations (which includes the baselines) soon!

To prepare each scene:

- Install Python packages

pip install -r requirements.txt

- Real Bunny Scene (our method)

bash scripts/train_bunny_real.sh

- Mirror Scene

bash scripts/train_mirror_data.sh

- Prism Scene

bash scripts/train_block_data.sh

- Bunny Blender Scene (processing to a unified format in progress)
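To run several scenes back to back, the per-scene commands above can be wrapped in a small loop. This is only a sketch: `run_scenes` is a hypothetical helper (not part of this repo), and it assumes each script can be launched from the repo root with no extra arguments, as in the commands listed above.

```shell
#!/usr/bin/env bash
# Sketch: run several scene scripts in sequence, stopping at the first failure.
# run_scenes is an illustrative helper, not part of Touch-GS.

run_scenes() {
    local script
    for script in "$@"; do
        echo "=== running $script ==="
        # Stop early so a failed scene is not buried under later output.
        bash "$script" || { echo "failed: $script" >&2; return 1; }
    done
}

# Example call from the repo root, using the three scripts listed above:
#   run_scenes scripts/train_bunny_real.sh scripts/train_mirror_data.sh scripts/train_block_data.sh
```

Stopping at the first failure keeps a broken scene from being hidden by the logs of the scenes that follow it.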

The main script writes the test-set renders to exp-name-renders within touch-gs-data.

You can render videos along a custom camera path, as detailed here. This is how we produced the videos on our website.